Google’s Antigravity suspension wave has jolted the AI developer ecosystem, leaving thousands of OpenClaw users abruptly cut off from Gemini model access. What initially looked like a routine backend capacity hiccup quickly spiraled into account restrictions, persistent 403 errors, and growing accusations that Google had overcorrected.

In this article, we break down what happened, how the abuse mechanism worked, who is affected, and what developers should do next to avoid similar bans.

What Happened: Google Suspends Antigravity Access Over OpenClaw Integration

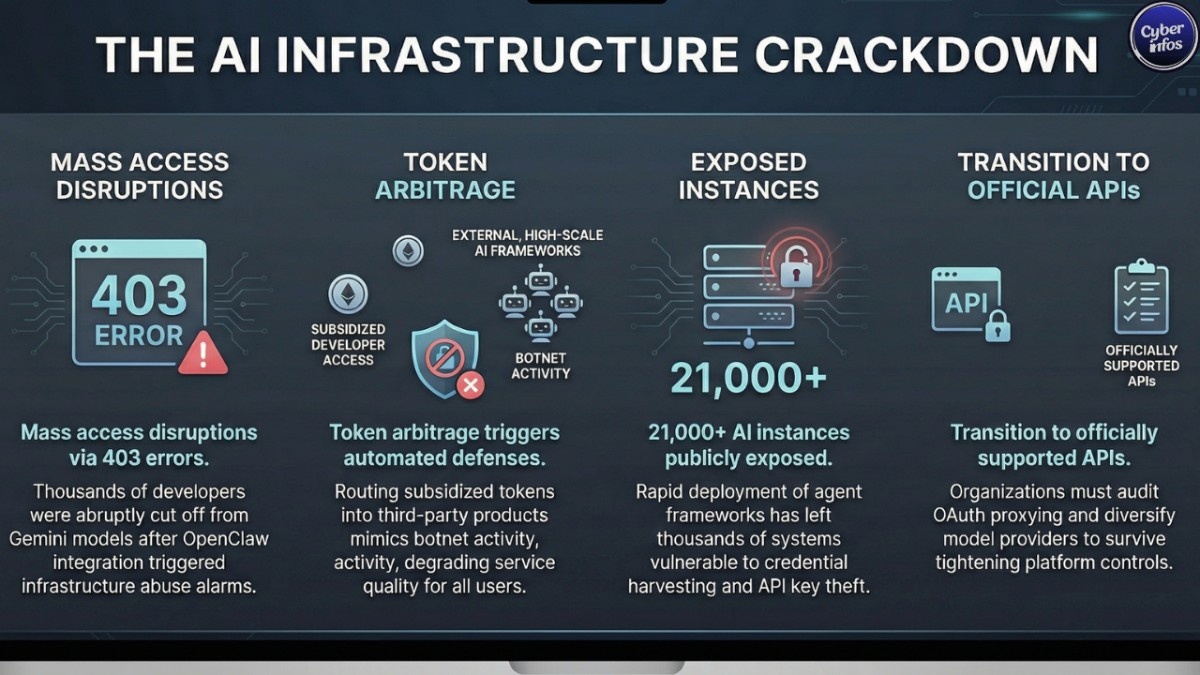

In mid-February 2026, users of OpenClaw began reporting sudden 403 errors while attempting to access Google’s Antigravity developer platform. No warning banners. No graceful degradation. Just hard stops.

OpenClaw, launched in November 2025, exploded in popularity, surpassing 219,000 GitHub stars, by enabling users to deploy local AI agents capable of handling email workflows, browser automation, and multi-step task orchestration. For developers hungry to experiment with agentic systems, it felt like rocket fuel.

Many authenticated through Google’s Antigravity platform to gain access to premium-tier models such as Gemini 2.5 Pro at subsidized developer pricing. But instead of building directly within Antigravity’s intended framework, they routed usage through OpenClaw’s OAuth plugin. Functionally, that turned Antigravity infrastructure into a backend engine for a third-party product.

That’s the part that triggered alarms.

According to public comments from Varun Mohan, the traffic surge “tremendously degraded the quality of service for our users.” Automated monitoring systems flagged patterns consistent with infrastructure abuse and token arbitrage, the kind of activity that, from the backend, can look indistinguishable from bot-driven API scraping.

Google maintains that enforcement actions were scoped only to Antigravity product access for violating accounts. Still, multiple users have reported broader disruptions across Google services. Whether that’s spillover, misconfiguration, or perception is still being debated in developer forums.

The suspension wave landed just as Anthropic updated its policies to explicitly prohibit third-party OAuth integrations that obscure product-level consumption. Different vendor, same direction of travel.

AI platforms are tightening the perimeter. Fast.

How the Vulnerability Works

This wasn’t a traditional breach. No zero-day. No credential stuffing campaign.

It was infrastructure economics colliding with platform policy.

- Developers authenticated to Antigravity using Google accounts.

- OpenClaw’s OAuth plugin retrieved access tokens for Gemini APIs.

- Rather than operating strictly inside Antigravity’s ecosystem, OpenClaw used those tokens to power its independent AI agent framework.

- Automated agent workloads generated sustained backend spikes, degrading shared infrastructure performance.

Think of it this way: imagine buying discounted tickets intended for a developer preview screening, then piping that stream into a commercial theater. The ticket was valid. The redistribution model wasn’t.

That’s token arbitrage in action: exploiting pricing or access asymmetries between subsidized developer environments and production-grade consumption.

From a security operations perspective, abnormal API bursts triggered enforcement heuristics. Infrastructure abuse patterns often resemble early-stage botnet activity. When automated agents hammer endpoints at scale, automated defense systems don’t pause to ask about developer intent.

They shut it down.
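To make the idea of a burst heuristic concrete, here is a minimal sketch of a sliding-window request counter in Python. It is purely illustrative: the `BurstDetector` class, its thresholds, and its behavior are assumptions for this example and say nothing about how Google’s actual enforcement systems are built.

```python
from collections import deque


class BurstDetector:
    """Hypothetical heuristic: flag a client that exceeds a request limit
    within a sliding time window. Not any vendor's real implementation."""

    def __init__(self, max_requests: int, window_seconds: float) -> None:
        self.max_requests = max_requests
        self.window_seconds = window_seconds
        self._timestamps = deque()

    def record(self, timestamp: float) -> bool:
        """Record one request; return True if the window is now over the limit."""
        self._timestamps.append(timestamp)
        # Drop requests that have aged out of the window.
        cutoff = timestamp - self.window_seconds
        while self._timestamps and self._timestamps[0] <= cutoff:
            self._timestamps.popleft()
        return len(self._timestamps) > self.max_requests


if __name__ == "__main__":
    detector = BurstDetector(max_requests=3, window_seconds=10.0)
    # Four requests inside ten seconds trip the limit on the fourth call.
    for t in (0.0, 1.0, 2.0, 3.0):
        print(t, detector.record(t))
```

A check like this sees only timing and volume. A legitimate agent loop and a scraper produce the same signal, which is why intent never enters the decision.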

Who Is at Risk?

- Developers relying on OpenClaw’s OAuth integration

- AI Ultra subscribers paying $249.99 per month

- Teams accessing Gemini 2.5 Pro via Antigravity

- Organizations running OpenClaw agents in production pipelines

For individual developers, the damage is inconvenience and lost access. For startups running demos or client pilots, it’s downtime, contractual pressure, and credibility on the line.

Security researchers have flagged more than 21,000 publicly exposed OpenClaw instances vulnerable to configuration harvesting and infostealer campaigns. That means misconfigured agents could expose API keys or local credentials.

They’re sitting on Shodan, the search engine for internet-exposed devices.

Most teams deploying agent frameworks are moving fast. Compliance reviews tend to lag. That imbalance rarely ends well.

Expert Analysis: Why This Matters

The Google Antigravity suspension marks a visible inflection point in AI governance.

Over the past year, AI vendors aggressively subsidized model access to drive ecosystem adoption. Growth came first. Guardrails came later. But as inference workloads balloon and GPU capacity tightens, the financial calculus changes.

OpenClaw creator Peter Steinberger publicly labeled Google’s move “draconian” and announced plans to remove Antigravity support. Meanwhile, competitors like OpenAI have appeared more permissive toward third-party harnesses, deepening philosophical splits across ecosystems.

The broader signal is unmistakable: AI platforms are shifting toward tighter control planes and clearer monetization boundaries.

There’s also a geopolitical layer. China’s Ministry of Industry and Information Technology recently warned about misconfigured AI agent systems creating systemic cybersecurity exposure.

Agentic AI is powerful. But power without governance scales risk just as efficiently as it scales productivity.

What You Should Do Right Now

- Review Google Antigravity Terms of Service – Validate architectural alignment with usage boundaries.

- Avoid OAuth Proxying for Production Systems – Use officially supported APIs.

- Audit Exposed Instances – Harden configs and rotate API keys.

- Monitor Account Activity Logs – Watch for abnormal token spikes.

- Diversify Model Providers – Reduce single-vendor dependency risk.

- Document Vendor Dependencies in Risk Assessments – Treat AI APIs as critical operational assets.
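As one way to approach the log-monitoring item, the sketch below flags days whose token usage jumps far above a rolling baseline. It assumes you can export daily token counts from your provider’s usage logs; the function name `find_token_spikes`, the seven-day baseline, and the three-sigma threshold are illustrative choices, not a documented Google feature.

```python
from statistics import mean, stdev


def find_token_spikes(
    daily_tokens: list[int],
    threshold: float = 3.0,
    baseline_days: int = 7,
) -> list[int]:
    """Return indexes of days whose usage exceeds
    baseline mean + threshold * baseline standard deviation."""
    spikes = []
    for i in range(baseline_days, len(daily_tokens)):
        baseline = daily_tokens[i - baseline_days:i]
        mu = mean(baseline)
        sigma = stdev(baseline)
        # Guard against a perfectly flat baseline (sigma == 0).
        limit = mu + threshold * max(sigma, 1.0)
        if daily_tokens[i] > limit:
            spikes.append(i)
    return spikes


if __name__ == "__main__":
    # Hypothetical usage data: day 8 is a runaway agent loop.
    usage = [100, 110, 90, 105, 95, 100, 102, 98, 5000, 101]
    print(find_token_spikes(usage))
```

One limitation: a spike inflates the baseline for the following days, so a production version would likely exclude flagged days from later baselines.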

Most organizations won’t act until access disappears.
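Acting before access disappears can be as simple as wiring in a second provider. The following sketch shows ordered failover across model providers; `ProviderAccessError`, `complete_with_fallback`, and the provider callables are hypothetical names for this example, and each callable stands in for whatever officially supported client you actually use.

```python
from typing import Callable, Sequence


class ProviderAccessError(Exception):
    """Raised by a provider wrapper when the vendor refuses access (e.g. HTTP 403)."""

    def __init__(self, provider: str, status: int) -> None:
        super().__init__(f"{provider} returned HTTP {status}")
        self.provider = provider
        self.status = status


# A provider is a (name, callable) pair; the callable maps a prompt to a response.
Provider = tuple[str, Callable[[str], str]]


def complete_with_fallback(prompt: str, providers: Sequence[Provider]) -> tuple[str, str]:
    """Try each provider in order; return (provider_name, response) from the first success."""
    errors = []
    for name, call in providers:
        try:
            return name, call(prompt)
        except ProviderAccessError as exc:
            # Access was refused; note it and move on to the next vendor.
            errors.append(str(exc))
    raise RuntimeError("all providers failed: " + "; ".join(errors))


if __name__ == "__main__":
    def suspended(prompt: str) -> str:
        raise ProviderAccessError("primary", 403)

    def backup(prompt: str) -> str:
        return "echo: " + prompt

    print(complete_with_fallback("hello", [("primary", suspended), ("backup", backup)]))
```

Keeping the provider list in configuration rather than code means a suspension becomes a config change instead of an emergency rewrite.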

Timeline of Events

- January 2026 → OpenClaw launches publicly

- January 2026 → Growth surpasses 200K GitHub stars

- Mid-February 2026 → Developers report 403 errors

- February 23, 2026 → Varun Mohan addresses enforcement publicly

- Late February 2026 → Community pivots to forks like Nanobot and IronClaw

Final thoughts

The Google Antigravity suspension isn’t just a ToS dispute. It’s a preview of how AI ecosystems are maturing — and hardening.

Convenience-driven integrations feel harmless in early growth phases. They rarely stay that way once real infrastructure strain enters the picture.

The real question isn’t whether platforms will clamp down again. It’s whether your architecture can survive when they do.