ClawdBot, now operating under the name Moltbot, has quickly moved from a niche developer project to one of the most talked-about examples of an autonomous AI agent in 2026. Often promoted as a self-hosted, always-on AI employee, ClawdBot AI is designed to stay running in the background, remember context over time, and carry out real actions on behalf of its user.

At the same time, growing attention around ClawdBot security risks has triggered serious concern across the cybersecurity and AI research communities. Many experts now point to Moltbot AI as a real-world illustration of broader agentic AI security challenges situations where powerful automation capabilities are released faster than robust safety models can be applied. This article clearly explains what ClawdBot AI is, how it works in practice, and why its current design introduces meaningful autonomous AI agent risks for both individuals and enterprises.

What Is ClawdBot / Moltbot AI?

ClawdBot AI is an open-source, self-hosted AI agent built to run continuously on systems controlled by the user, including laptops, Mac minis, home lab servers, and cloud-based VPS environments. Unlike browser-based chatbots that only respond when prompted, a self-hosted AI agent such as Moltbot remains active at all times, maintaining memory and direct access to system resources.

Core Architecture of ClawdBot AI

From a practical standpoint, ClawdBot operates as a persistent AI agent connected to tools that allow it to interact directly with the digital world. These tools give the agent the ability to:

- Execute shell and system commands

- Read from and write to local filesystems

- Communicate with APIs and SaaS platforms

- Automate multi-step workflows across services

One of the most distinctive features of ClawdBot AI is its chat-based control plane. Users send instructions through familiar platforms such as WhatsApp, Telegram, Discord, Slack, Signal, iMessage, Microsoft Teams, and Google Chat. While this makes automation feel intuitive and conversational, it also dramatically increases the AI agent attack surface, since everyday messages can translate directly into executable actions.

Why Moltbot Was Rebranded

In January 2026, ClawdBot was officially renamed Moltbot following a trademark dispute with Anthropic, the organization behind the Claude AI models. Concerns were raised that the name “Clawd” was too similar to “Claude,” prompting a formal request for a name change.

The Moltbot rebrand was framed as a symbolic evolution rather than a reset. From a security perspective, however, the same issues highlighted in earlier Moltbot security analysis remained unchanged. The underlying architecture and its associated risks continued exactly as before, making the rebrand largely cosmetic rather than corrective.

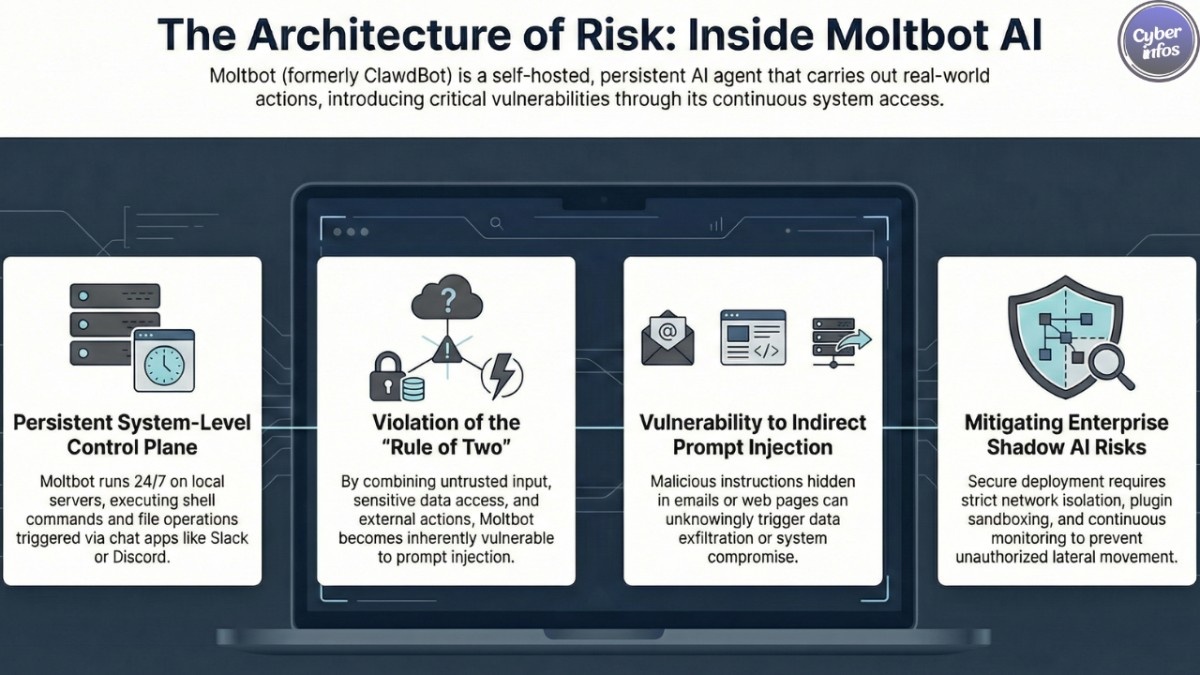

The Core Security Design Problem

The most serious ClawdBot security risks are not caused by simple bugs or implementation mistakes. Instead, they stem from fundamental design decisions baked into how the autonomous agent operates.

The Agents “Rule of Two” and Agentic AI Security

Security researchers introduced the Agents Rule of Two as a guiding principle for safer autonomous AI systems. The rule suggests that an AI agent should avoid simultaneously doing all three of the following:

- Processing untrusted input

- Accessing sensitive data

- Taking external actions

When an AI system combines all three, it becomes especially vulnerable to prompt injection attacks.

How ClawdBot Violates This Principle

ClawdBot AI breaks this rule by default:

- It processes untrusted input from chats, emails, web pages, and social media

- It accesses sensitive data such as credentials, inboxes, files, and API keys

- It performs external actions including system command execution, file deletion, and message posting

This combination creates a fragile AI agent cybersecurity environment where a single malicious instruction can escalate into data loss, service abuse, or even complete system compromise.

Exposed Control Panels and Credential Leakage

One of the most concerning findings in recent Moltbot security analysis is the number of internet-exposed ClawdBot instances discovered online.

How These Exposures Happen

ClawdBot assumes that any request coming from 127.0.0.1 is trusted. When users deploy the agent behind reverse proxies or improperly configured firewalls, that assumption breaks down. External traffic can be mistaken for local traffic, allowing attackers to bypass authentication controls entirely. As a result, hundreds of exposed control panels have been discovered and accessed remotely.

Types of Data Exposed

- AI API keys and service tokens

- OAuth credentials connected to email and messaging services

- Full conversation histories from private chat platforms

- System-level command execution access

These weaknesses make ClawdBot an attractive target for AI credential leakage and large-scale data exfiltration attacks.

Prompt Injection Attacks in Real-World Scenarios

Among the various threats affecting ClawdBot AI, prompt injection attacks are the most immediate and damaging.

Direct Prompt Injection

In direct prompt injection attacks, adversaries send carefully crafted instructions through chat messages or emails. Because ClawdBot does not strongly isolate system instructions from user input, these prompts can override intended behavior. Documented outcomes include deleted inboxes, unauthorized messages sent on behalf of users, and unintended system command execution.

Indirect Prompt Injection

Indirect prompt injection presents an even greater danger. In these cases, malicious instructions are hidden inside content the autonomous AI agent processes automatically, such as:

- Emails

- Web pages

- Social media replies

When ClawdBot reads this content as part of normal workflows, it may unknowingly execute attacker-controlled instructions, further expanding the AI agent attack surface.

Malicious Plugins and Skills Ecosystem Risks

ClawdBot allows functionality to be extended through community-created “skills.” However, there is no mandatory sandboxing, permission framework, or enforced security review.

This creates a serious AI malware attack vector:

- Malicious skills can quietly harvest credentials

- Plugins may execute arbitrary system commands

- Backdoors can persist undetected within the agent

From a defensive standpoint, the ClawdBot skills ecosystem resembles an unsandboxed extension marketplace with full access to the host system.

Shadow AI and Enterprise Security Risks

Inside organizations, ClawdBot is a clear example of rising shadow AI risk.

Why Shadow AI Is More Dangerous Than Shadow IT

- Operate independently with minimal supervision

- Execute commands across multiple systems

- Move, transform, and share data automatically

- Often generate little to no audit visibility

An employee can deploy a self-hosted AI agent like Moltbot in minutes, creating a powerful automation layer invisible to IT and security teams. This introduces serious enterprise AI security risks, including compliance violations, data exposure, and new lateral movement paths for attackers.

Risk Summary: Why ClawdBot AI Is High Risk

- Persistent background execution

- Broad access to sensitive systems and data

- Continuous handling of untrusted input

- Minimal security controls enabled by default

Together, these factors create an environment where a single prompt injection vulnerability can escalate into full compromise, credential theft, or widespread data leakage.

Final Recommendations

ClawdBot and Moltbot should be treated as high-risk autonomous AI infrastructure, not as casual productivity tools. Any deployment should include:

- Strong authentication and strict access controls

- Network isolation and hardened firewall configurations

- Secure storage and management of secrets

- Plugin sandboxing and least-privilege enforcement

- Continuous monitoring, logging, and auditing

Until its architecture aligns with established agentic AI security best practices, individuals and organizations should approach ClawdBot AI with caution.

ClawdBot offers a glimpse into the future of automation but it also makes clear that securing autonomous AI agents must come before scaling them.

Disclaimer

This article is provided for informational and educational purposes only and reflects publicly available research and analysis at the time of writing. It does not constitute professional cybersecurity, legal, or compliance advice.

While care has been taken to ensure accuracy, technologies, security postures, and risk profiles may change over time and vary by deployment. References to ClawdBot AI, Moltbot AI, or related platforms are made solely for discussion and research, not endorsement or affiliation. Readers should conduct independent assessments and consult qualified professionals before deploying autonomous or self-hosted AI systems. The author assumes no liability for any actions taken based on this content.