Most pentest AI tools still act generic.

Here is why that fails. Pentest ai agents replaces the “one model does everything” idea with 28 focused operators mapped to real offensive workflows. Instead of guessing intent, it routes tasks to agents built for recon, exploitation, or reporting.

By the third prompt, the difference shows: pentest ai agents behaves like a coordinated team, not a chatbot. So what actually changes when AI mirrors how red teams operate?

In this article: how the framework works, what each agent category does, how execution stays controlled, and where this fits in real-world engagements using AI for penetration testing.

What is pentest ai agents?

Pentest ai agents is an open-source toolkit that converts Claude Code into a structured penetration testing assistant for authorized engagements.

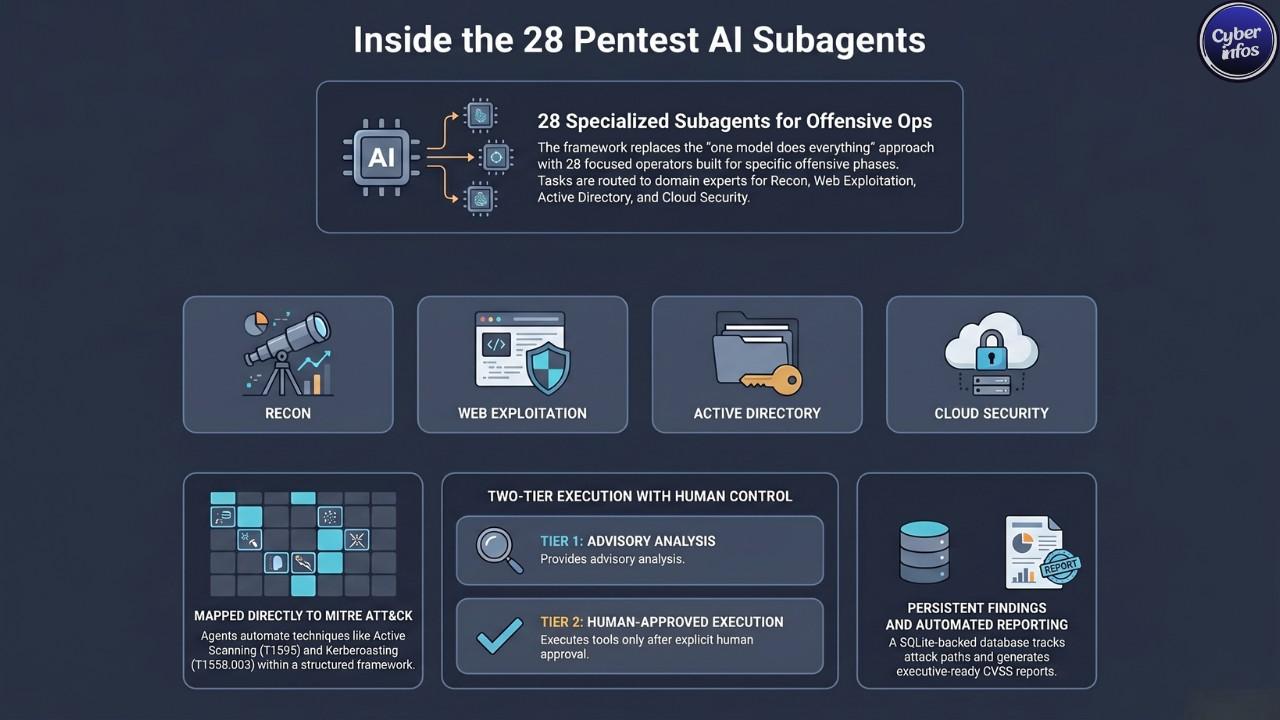

It deploys 28 subagents, each aligned to a specific testing phase such as reconnaissance, web exploitation, Active Directory attacks, cloud security, and reporting. Each task goes to a specialist. That alone changes workflow efficiency.

Released by security researcher 0xSteph, the toolkit integrates locally with minimal setup. Prompts are automatically routed based on intent. You ask for recon analysis, it invokes recon logic. You ask for AD abuse paths, it switches context immediately.
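
The routing idea can be illustrated with a deliberately naive keyword matcher. This is only a sketch of the concept: Claude Code decides delegation from each agent's description rather than from a keyword table, and the agent names and keyword sets below are hypothetical.

```python
# Toy intent router: a simplified stand-in for description-based delegation.
# Agent names and keyword sets are hypothetical, chosen for illustration only.
AGENT_KEYWORDS = {
    "recon-advisor": {"nmap", "recon", "ports", "scan"},
    "ad-attacker": {"kerberoast", "kerberoasting", "delegation", "acl", "domain"},
    "report-generator": {"report", "summary", "remediation"},
}


def route(prompt: str, default: str = "general") -> str:
    """Return the agent whose keywords overlap most with the prompt."""
    words = {w.strip(".,?!:;").lower() for w in prompt.split()}
    best_agent, best_score = default, 0
    for agent, keywords in AGENT_KEYWORDS.items():
        score = len(words & keywords)
        if score > best_score:
            best_agent, best_score = agent, score
    return best_agent


print(route("Analyze this nmap scan and list open ports"))
print(route("Find Kerberoasting and delegation abuse paths"))
print(route("What is for lunch?"))
```

A prompt that matches no specialist falls back to the default, which is roughly what happens when no subagent description fits the request.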

From a SOC analyst perspective, this resembles real escalation paths. Alerts don’t go to generalists; they move to domain experts. What threat intelligence consistently shows is that attackers operate in phases. This framework mirrors that structure directly, forming an AI pentest toolkit built around real workflows.

One-command install and zero-server setup

Installation runs through a single command:

curl -fsSL https://raw.githubusercontent.com/0xSteph/pentest-ai-agents/main/install.sh | bash

The script clones the repository and installs agent definitions into ~/.claude/agents/.

Run it again safely to update. No external infrastructure required.

Flags like --project isolate agents per engagement. That matters when handling multiple clients with strict separation. The --global --lite option shifts advisory logic to lower-cost models, reducing token consumption in long engagements.
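
To see which definitions are visible from a given engagement directory, a short script can list both locations. This is a sketch under assumptions: it assumes --project places files under the project's .claude/agents/ directory (Claude Code's convention for project-level subagents), it treats the file name as the agent name for simplicity, and the helper name visible_agents is hypothetical.

```python
from pathlib import Path


def visible_agents(project_dir: Path, home_dir: Path) -> dict[str, Path]:
    """Map agent name -> definition file, letting project-level files win."""
    agents: dict[str, Path] = {}
    # Scan the user-level directory first so project-level entries overwrite it.
    for base in (home_dir / ".claude" / "agents", project_dir / ".claude" / "agents"):
        if base.is_dir():
            for path in sorted(base.glob("*.md")):
                agents[path.stem] = path
    return agents


if __name__ == "__main__":
    for name, path in visible_agents(Path.cwd(), Path.home()).items():
        print(f"{name}: {path}")
```

Running it from two different client directories is a quick way to confirm that one engagement's agents do not leak into another.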

In practice, what I see is teams abandoning tools because setup creates too much friction, and that is exactly what attackers count on. A one-command install avoids the problem entirely.

How Claude Code subagents work behind the scenes

Each subagent is a Markdown file with structured YAML defining role, scope, and behavior. Claude reads these definitions and maps prompts to the right agent.

- Simple design.

- Effective execution.
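
To make the file format concrete, here is a minimal sketch that splits a definition into its YAML header and its prompt body. The sample definition is hypothetical and not copied from the repository; the field names (name, description, tools) follow Claude Code's subagent format. The parser handles only flat key: value pairs, so a real YAML library would be the better choice for anything more complex.

```python
# Hypothetical agent definition; the real files in the toolkit may differ.
SAMPLE_AGENT = """\
---
name: recon-advisor
description: Analyzes Nmap, Nessus, and BloodHound output and suggests next steps.
tools: Read, Grep
---
You are a reconnaissance advisor for authorized engagements only.
"""


def parse_agent(text: str) -> tuple[dict[str, str], str]:
    """Split a definition into (frontmatter fields, system prompt body)."""
    lines = text.strip().splitlines()
    if not lines or lines[0].strip() != "---":
        raise ValueError("missing opening '---' frontmatter delimiter")
    try:
        end = next(i for i, line in enumerate(lines[1:], start=1) if line.strip() == "---")
    except StopIteration:
        raise ValueError("missing closing '---' frontmatter delimiter") from None
    meta: dict[str, str] = {}
    for line in lines[1:end]:
        if ":" in line:
            key, value = line.split(":", 1)  # split on the first colon only
            meta[key.strip()] = value.strip()
    body = "\n".join(lines[end + 1:]).strip()
    return meta, body


meta, body = parse_agent(SAMPLE_AGENT)
print(meta["name"], "->", meta["tools"])
print(body)
```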

This removes context overload. Instead of carrying long conversations, tasks are segmented. Recon remains recon. Exploitation stays isolated.

From a SOC analyst perspective, context separation reduces analyst fatigue and error rates. When tools blur phases, mistakes follow.

The logs existed. Nobody was watching them.

Inside the 28 Claude Code subagents

The toolkit splits agents into offensive operations and reporting/learning roles.

Offensive operations agents

These agents handle the active attack lifecycle.

- Engagement Planner builds structured testing plans mapped to MITRE ATT&CK techniques like T1595 (Active Scanning).

- Recon Advisor analyzes outputs from Nmap, Nessus, and BloodHound, identifying attack surfaces tied to T1046 (Network Service Discovery).

- OSINT Collector gathers external intelligence, mapping to T1593 (Search Open Websites/Domains).

- Web Hunter performs fuzzing and injection discovery using tools like ffuf, sqlmap, and dalfox, aligned with T1190 (Exploit Public-Facing Application).

- Vuln Scanner orchestrates automated scanning tools and prioritizes findings based on CVSS scoring.

- AD Attacker focuses on Kerberoasting (T1558.003), delegation abuse, and ACL exploitation (T1484).

- Privilege Escalation covers SUID abuse (T1548.001) and token impersonation (T1134).

- Cloud Security Agent evaluates IAM privilege escalation paths in AWS and Azure environments.

- Business Logic Hunter targets race conditions and workflow bypasses—issues traditional scanners miss.

- Exploit Chainer builds multi-step attack paths combining vulnerabilities into realistic intrusion scenarios.
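
The chaining idea can be modeled as a path search over findings. The sketch below is not the Exploit Chainer's actual logic; it assumes each finding can be expressed as a step from one access level to another, and the sample data is hypothetical.

```python
from collections import deque

# Hypothetical findings: each entry is (from_state, to_state, technique).
FINDINGS = [
    ("external", "web-server", "SQL injection (T1190)"),
    ("web-server", "svc-account", "Kerberoasting (T1558.003)"),
    ("svc-account", "domain-admin", "ACL abuse"),
    ("external", "vpn-portal", "default credentials"),
]


def find_chain(findings, start, goal):
    """Breadth-first search for the shortest chain of findings from start to goal."""
    graph = {}
    for src, dst, technique in findings:
        graph.setdefault(src, []).append((dst, technique))
    queue = deque([(start, [])])
    seen = {start}
    while queue:
        node, path = queue.popleft()
        if node == goal:
            return path
        for nxt, technique in graph.get(node, []):
            if nxt not in seen:
                seen.add(nxt)
                queue.append((nxt, path + [f"{node} -> {nxt}: {technique}"]))
    return None  # no chain connects start to goal


for step in find_chain(FINDINGS, "external", "domain-admin") or []:
    print(step)
```

Breadth-first search returns the chain with the fewest steps, which is a reasonable first approximation of the path an attacker would prefer.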

Attack chains define real risk. Single vulnerabilities rarely do. From my own experience handling incident response, attackers rarely stop at initial access. They chain misconfigurations until they reach domain dominance.

Detection happened. Response did not.

Reporting and learning agents

These agents focus on output and knowledge transfer.

- Report Generator produces structured reports with executive summaries, CVSS scoring, and remediation steps.

- CTF Solver assists in solving training challenges across multiple domains.

In practice what I see is reporting becoming the bottleneck in engagements. Analysts spend hours translating technical findings into business language. This agent reduces that effort significantly.

Two-tier execution model: advisory vs command execution

The framework separates analysis from action.

Tier 1 – advisory agents

Tier 1 agents analyze outputs and recommend next steps. They never execute commands. You stay in control.

This mode works well in restricted environments where execution must remain manual. What threat intelligence consistently shows is that uncontrolled automation introduces risk faster than it improves efficiency.

Tier 2 – tool-executing agents

Tier 2 agents can generate and execute commands, but only after explicit approval. Every command is visible before execution. Nothing runs silently.
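
The approval gate can be sketched in a few lines. This is an illustration of the pattern, not the toolkit's implementation: the function names are hypothetical, and a harmless Python one-liner stands in for a real tool invocation.

```python
import shlex
import subprocess
import sys
from typing import Callable, Optional


def ask_operator(shown: str) -> bool:
    """Default approver: prompt the human operator on the terminal."""
    return input(f"Run `{shown}`? [y/N] ").strip().lower() == "y"


def run_with_approval(
    command: list[str],
    approve: Callable[[str], bool] = ask_operator,
) -> Optional[str]:
    """Display the exact command and run it only if the approver agrees."""
    shown = shlex.join(command)
    if not approve(shown):
        print(f"skipped: {shown}")
        return None
    result = subprocess.run(command, capture_output=True, text=True, check=False)
    return result.stdout


# Harmless stand-in for a real tool such as an Nmap scan.
demo = [sys.executable, "-c", "print('scan complete')"]
print(run_with_approval(demo, approve=lambda shown: False))  # operator declines
print(run_with_approval(demo, approve=lambda shown: True))   # operator approves
```

Passing the approver as a parameter keeps the gate testable; leaving it at the default prompts a human before anything runs.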

These agents operate tools like:

- Nmap (T1046)

- Nuclei (T1595)

- BloodHound (T1482 – Domain Trust Discovery)

- sqlmap (T1190)

From experience, I have seen automated scripts cause unintended outages during engagements. Human approval gates reduce that risk significantly. And that is exactly what defenders overlook, even when using an offensive security AI assistant.

Persistent findings with a SQLite-backed database

The findings.sh script stores engagement data in a SQLite database. Lightweight storage. High operational value.

This includes targets, vulnerabilities, credentials, and attack paths. It enables multi-day testing without losing context. From a SOC analyst perspective, losing context between sessions is a recurring issue. Persistent storage solves that.
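
The persistence pattern looks roughly like this when written with Python's built-in sqlite3 module. The table layout and function names are assumptions made for illustration; the schema that findings.sh actually uses is not described here.

```python
import sqlite3


def open_store(path: str = ":memory:") -> sqlite3.Connection:
    """Open (or create) a findings database; the schema is hypothetical."""
    conn = sqlite3.connect(path)
    conn.execute(
        """CREATE TABLE IF NOT EXISTS findings (
               id INTEGER PRIMARY KEY,
               target TEXT NOT NULL,
               title TEXT NOT NULL,
               cvss REAL,
               status TEXT DEFAULT 'open'
           )"""
    )
    return conn


def add_finding(conn: sqlite3.Connection, target: str, title: str, cvss: float) -> int:
    """Insert one finding and return its row id."""
    with conn:  # commits on success, rolls back on error
        cur = conn.execute(
            "INSERT INTO findings (target, title, cvss) VALUES (?, ?, ?)",
            (target, title, cvss),
        )
    return cur.lastrowid


def open_findings(conn: sqlite3.Connection) -> list[tuple]:
    """Return open findings, highest CVSS score first."""
    return conn.execute(
        "SELECT target, title, cvss FROM findings "
        "WHERE status = 'open' ORDER BY cvss DESC"
    ).fetchall()


store = open_store()  # pass a file path instead to persist across sessions
add_finding(store, "10.0.0.5", "SMB signing disabled", 5.3)
add_finding(store, "10.0.0.7", "SQL injection in login form", 9.8)
for row in open_findings(store):
    print(row)
```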

The logs existed. Nobody correlated them.

From findings to client-ready reports

The Report Generator converts raw findings into structured documentation.

Typical output includes:

- Executive summaries for stakeholders

- Detailed vulnerability breakdowns

- CVSS scoring

- Remediation recommendations
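
As a rough illustration of the scoring and ordering step, the sketch below maps CVSS v3.x base scores to their standard qualitative ratings and renders a plain-text summary. The report layout and field names are hypothetical and do not reflect the Report Generator's actual output.

```python
def severity(score: float) -> str:
    """Map a CVSS v3.x base score to its qualitative severity rating."""
    if not 0.0 <= score <= 10.0:
        raise ValueError("CVSS base scores range from 0.0 to 10.0")
    if score == 0.0:
        return "None"
    if score < 4.0:
        return "Low"
    if score < 7.0:
        return "Medium"
    if score < 9.0:
        return "High"
    return "Critical"


def render_report(findings: list[dict]) -> str:
    """Render findings as text, most severe first."""
    ordered = sorted(findings, key=lambda f: f["cvss"], reverse=True)
    lines = ["Findings summary", ""]
    for f in ordered:
        lines.append(f"[{severity(f['cvss'])}] {f['title']} (CVSS {f['cvss']:.1f})")
        lines.append(f"  Remediation: {f['remediation']}")
    return "\n".join(lines)


# Hypothetical sample data.
SAMPLE = [
    {"title": "SMB signing disabled", "cvss": 5.3, "remediation": "Require SMB signing."},
    {"title": "SQL injection in login form", "cvss": 9.8, "remediation": "Use parameterized queries."},
]
print(render_report(SAMPLE))
```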

Reports become faster to produce.

In practice what I see is consultants spending up to 40% of engagement time writing reports. Automation reduces that overhead while maintaining structure.

Detection happened. Response did not.

MCP server, CI/CD integration, and local model support

The companion MCP server exposes over 150 tools for orchestration.

This enables:

- Automated scanning pipelines

- CI/CD integration (Jenkins, GitHub Actions)

- Continuous security validation

Testing shifts left. Earlier detection reduces impact.
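
A pipeline gate is one simple way to act on scan results in CI. The sketch below is independent of the MCP server and of any specific CI product: it assumes findings arrive as a list of records with a CVSS score, and the function name and threshold are hypothetical.

```python
import sys


def gate(findings: list[dict], threshold: float = 7.0) -> int:
    """Return an exit code: 1 if any finding meets the threshold, else 0."""
    blocking = [f for f in findings if f["cvss"] >= threshold]
    for f in blocking:
        print(f"BLOCKING: {f['title']} (CVSS {f['cvss']})", file=sys.stderr)
    return 1 if blocking else 0


# Hypothetical scan results for a single pipeline run.
results = [
    {"title": "Verbose server banner", "cvss": 3.1},
    {"title": "Outdated TLS configuration", "cvss": 5.9},
]
print("exit code:", gate(results))
```

In a Jenkins or GitHub Actions job, passing the returned value to sys.exit would fail the build whenever a finding at or above the threshold appears.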

The toolkit also supports local model deployment using OpenCode and tools like Ollama, allowing air-gapped environments to operate securely with MCP integration for pentesting.

Why pentest-ai-agents matters for pentesters and students

For professionals, it standardizes methodology across engagements. Teams can reuse structured workflows instead of reinventing approaches.

For students, it acts as an interactive mentor.

But here is the real question: are you understanding the methodology or just following instructions?

From a SOC analyst perspective, over-reliance on automation without understanding underlying techniques leads to blind spots.

Expert analysis: why this matters

What stands out here is not automation; it is structured delegation.

A similar shift occurred with BloodHound adoption. Teams moved from manual enumeration to graph-based attack mapping. This framework applies that same concept to AI-assisted workflows.

What the official statement does not say is how much cognitive load this removes. Analysts no longer need to track every phase mentally. The system segments tasks automatically.

Most guides tell you AI will replace pentesters. Here is why that is incomplete: it replaces repetitive execution, not analytical thinking.

One major defender mistake is assuming tools will correlate attack stages. They rarely do. Attack chains across recon, exploitation, and escalation often go unnoticed.

From my own incident response experience, lateral movement (T1021) combined with privilege escalation (T1548) frequently bypasses detection because logs are siloed.

Prediction: within 12 to 18 months, multi-agent AI workflows will become standard in red team operations, especially in cloud environments. The scale of cloud attack surfaces is growing faster than human teams can handle.

Full forensic details are not yet public; this analysis is based on available indicators and comparable attack patterns.

Security, governance, and ethical use

Human oversight must remain enabled at all times.

Scope must be strictly defined. Unauthorized testing introduces legal and operational risk. MCP servers should be treated as high-value assets with proper authentication, logging, and network controls.
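
Scope enforcement is easy to automate as a pre-flight check before any command is approved. This is a minimal sketch using Python's standard ipaddress module; the network ranges are hypothetical placeholders for whatever the rules of engagement specify.

```python
import ipaddress

# Hypothetical scope taken from a rules-of-engagement document.
IN_SCOPE = ["10.10.0.0/16", "192.168.50.0/24"]
EXCLUDED = ["10.10.99.0/24"]


def in_scope(target: str, allowed=IN_SCOPE, excluded=EXCLUDED) -> bool:
    """True only if target is inside an allowed network and no excluded one."""
    address = ipaddress.ip_address(target)
    if any(address in ipaddress.ip_network(net) for net in excluded):
        return False  # exclusions always win over allowances
    return any(address in ipaddress.ip_network(net) for net in allowed)


for host in ("10.10.5.20", "10.10.99.7", "8.8.8.8"):
    print(host, "in scope" if in_scope(host) else "OUT OF SCOPE")
```

Checking exclusions first means a carved-out subnet stays off limits even when it sits inside a larger authorized range.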

Automation without governance creates exposure. And that is exactly what attackers exploit.

Getting started with pentest-ai-agents

- Install in a lab environment first.

- Begin with Tier 1 advisory mode.

- Enable Tier 2 agents gradually with strict scope.

- Use findings.sh for persistence.

- Integrate MCP after validating workflows.

One question remains: will you validate outputs—or trust them blindly?

Final thoughts

Three takeaways: First, multi-agent design aligns directly with real pentesting workflows.

Second, controlled execution prevents unsafe automation.

Third, attack chain correlation exposes real risk beyond individual vulnerabilities.

Over the next 12–18 months, expect deeper CI/CD integration and wider adoption in cloud security testing.

AI will not replace pentesters; it will expose who understands the attack surface, especially when using pentest ai agents.